A History of Supercomputing because… I Feel Like It – Part Two

Now, this isn’t an extensive history; right now I’m just posting major developments. At a later time, I might revise and beef up these posts because it really is cool to see how we have progressed from vacuum tubes to our modern beasts! Onward!

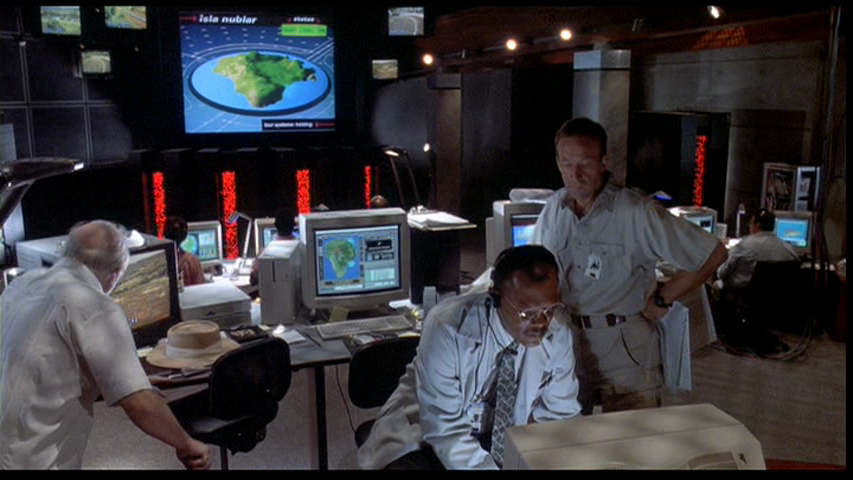

The next design for supercomputers involved many individual processors, each with its own 4 kbit of RAM (so cute compared to our GB of memory now). It first appeared in 1985 with Thinking Machines’ Connection Machine 1. This design was an improvement over vector processing and provided a massively parallel architecture. Fun fact: the Connection Machine 5 had a vital role in Jurassic Park. It was the supercomputer in the control room!

The cost of vector computers continued to fall as minicomputer makers improved their uniprocessor designs, as with the Convex C1.

In 1988, NASA adopted the Cray Y-MP. This new supercomputer could be equipped with 2, 4, or 8 vector processors. It peaked at 333 Mflops per processor, where “flops” stands for floating-point operations per second.
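To put that per-processor number in context, here is a minimal Python sketch of the arithmetic. It assumes perfect scaling (aggregate peak is just processor count times per-processor peak), which real machines rarely achieve. The helper names `aggregate_peak_flops` and `format_flops` are made up for this post.

```python
# Illustrative only: theoretical peak = processors x per-processor peak.
MFLOPS = 1e6  # one Mflops = one million floating-point operations per second


def aggregate_peak_flops(processors: int, per_processor_flops: float) -> float:
    """Theoretical peak for a machine, assuming perfect scaling across processors."""
    return processors * per_processor_flops


def format_flops(flops: float) -> str:
    """Render a flops value using the largest prefix that keeps the number >= 1."""
    for prefix, scale in (("P", 1e15), ("T", 1e12), ("G", 1e9), ("M", 1e6)):
        if flops >= scale:
            return f"{flops / scale:.2f} {prefix}flops"
    return f"{flops:.0f} flops"


# The three Y-MP configurations mentioned above, at 333 Mflops per processor.
for n in (2, 4, 8):
    print(n, "processors:", format_flops(aggregate_peak_flops(n, 333 * MFLOPS)))
```

So a fully loaded 8-processor configuration works out to a theoretical peak of roughly 2.66 Gflops.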

In 1990, Intel introduced the Touchstone Delta supercomputer. This device used 512 reduced instruction set computing (RISC) processors. RISC favors simple instructions over complex ones, on the theory that faster execution of each instruction increases overall performance.

There was a brief lull in development around the end of the Cold War, which slowed down supercomputing progress.

In 1992, Intel continued their Delta line and built the first Paragon supercomputer. This device was a distributed-memory multiprocessor system built on 32-bit RISC processors. Also that year, Cray’s C90 series reached a performance of one Gflop per processor, a big boost in performance.

In 1993, IBM promoted their SP 1 Power Parallel System. This device was based on the Performance Optimization With Enhanced RISC – Performance Computing (PowerPC) architecture. PowerPC later evolved into Power ISA. This architecture was developed by the Apple-IBM-Motorola alliance and has been replaced by Intel processors in many personal computers, but it is still popular in embedded systems.

Cray Research (founded in 1972) built their T3D. This was the company’s first attempt at a massively parallel machine. It could scale from 32 up to 2,048 processors.

In 1994, the Beowulf Project came into play with their 16-node Linux cluster. NASA used it for their Space Sciences project. The Beowulf Project has since evolved into the Beowulf cluster, which consists of a local network of commodity computers working together. The cool thing is that this project has helped bring high-performance computing power to networks of ordinary personal computers.

ASCI Red, built by Intel under the U.S. Department of Energy’s Accelerated Strategic Computing Initiative (ASCI), improved performance in 1997 on the LINPACK Benchmark at Sandia National Labs, reaching 1.3 Tflops. ASCI Blue continued to boost supercomputer performance, peaking at 3 Tflops in 1998, and ASCI White reached 7.22 Tflops in 2000.

In 2002, NEC’s Earth Simulator began running earth simulations at Japan’s Yokohama Institute for Earth Sciences. Think of how much data that involves! That same year, Linux clusters became popular, reaching 11 Tflops! I use Linux!

China’s Tianhe-1A, located at the National Supercomputing Center in Tianjin, reached 2.57 Pflops, a huge, huge jump in performance, and became the world’s fastest computer in 2010. Its reign was short-lived, lasting only until Japan’s K computer, built by Fujitsu, reached 8 Pflops in June 2011 and 10 Pflops that November. Still, Tianhe-1A remains one of the few petascale supercomputers in the world.

Supercomputing is still improving. In January 2012, Intel purchased QLogic’s InfiniBand product line in hopes of meeting their promise of developing exascale technology by 2018. Wow! Again, more power means more data processing capability. Think of what else we could simulate with this type of technology. How much more detail will we be able to examine?

Things are looking up.